Preface: Embedded AI solutions run on the Linux platform. With their high performance, small size, and low power consumption, they can do more real-time processing in demanding environments than ever before.

Background: NVIDIA® Jetson Nano™ Developer Kit is a small, powerful computer that lets you run multiple neural networks in parallel for applications like image classification, object detection, segmentation, and speech processing, all in an easy-to-use platform that runs on as little as 5 watts.

Jetson Board Support Package

• Linux Kernel: A UNIX-like computer operating system kernel mostly used for mobile devices.

• Sample Root Filesystem derived from Ubuntu: A sample root filesystem of the Ubuntu distribution. Helps you create root filesystems for different configurations.

• Toolchain: A set of development tools chained together by stages that runs on various architectures.

• Bootloader: Boot software for initializing the system on the chip.

• Sources: Source code for kernel and multimedia applications.

…..more

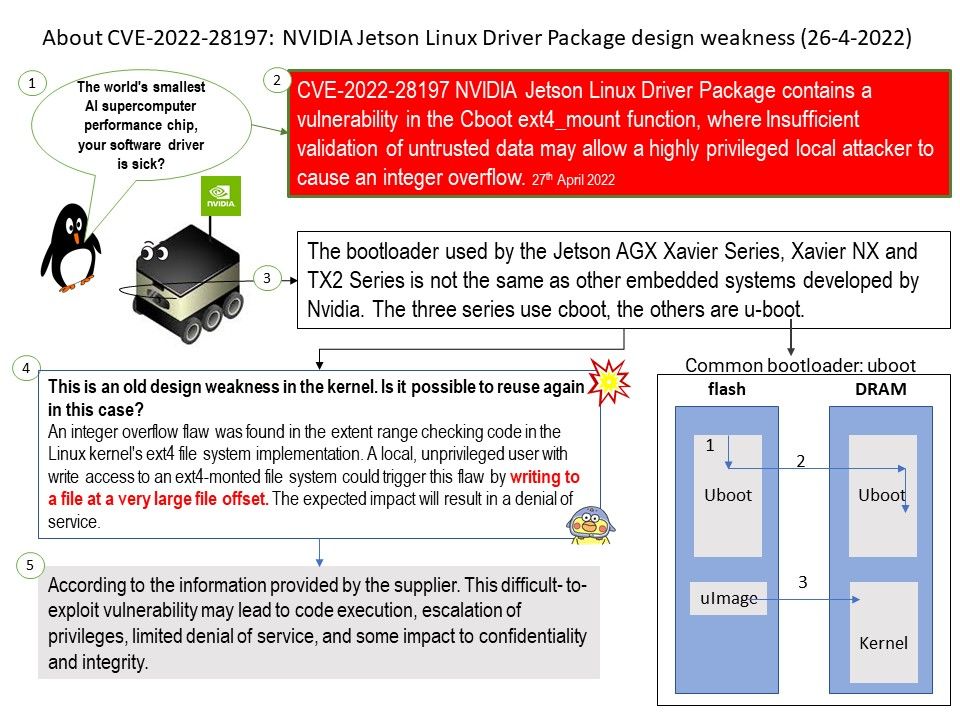

Vulnerability details: NVIDIA Jetson Linux Driver Package contains a vulnerability in the Cboot ext4_mount function, where insufficient validation of untrusted data may allow a highly privileged local attacker to cause an integer overflow. This difficult-to-exploit vulnerability may lead to code execution, escalation of privileges, limited denial of service, and some impact to confidentiality and integrity.

Speculation: This resembles an older design weakness in the Linux kernel. Could the same kind of flaw have resurfaced in this case?

An integer overflow flaw was found in the extent range checking code in the Linux kernel’s ext4 file system implementation. A local, unprivileged user with write access to an ext4-mounted file system could trigger this flaw by writing to a file at a very large file offset. The expected impact is a denial of service.
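To illustrate how an integer overflow can defeat a range check of this kind, here is a minimal sketch in Python that simulates 32-bit unsigned arithmetic. It is not the Cboot or kernel code; the function names (`unsafe_range_ok`, `safe_range_ok`), the 32-bit width, and the sample values are all assumptions chosen for illustration.

```python
# Hypothetical illustration only: simulates 32-bit unsigned wraparound.
# None of these names come from Cboot or the Linux kernel.
U32_MASK = 0xFFFFFFFF


def unsafe_range_ok(start: int, length: int, limit: int) -> bool:
    """Naive check: the end position is computed in 32 bits and may wrap."""
    end = (start + length) & U32_MASK  # wraps past 2**32 - 1 back to a small value
    return end <= limit


def safe_range_ok(start: int, length: int, limit: int) -> bool:
    """Overflow-free check: never computes start + length."""
    return length <= limit and start <= limit - length


if __name__ == "__main__":
    limit = 1_000_000      # assumed size of the valid region, in blocks
    start = 0xFFFFFFF0     # very large starting block
    length = 0x20          # small length that pushes the sum past 2**32 - 1

    print("unsafe check accepts:", unsafe_range_ok(start, length, limit))
    print("safe check accepts:  ", safe_range_ok(start, length, limit))
```

With these sample values the naive check accepts an out-of-range request because the sum wraps around to a small number, while the rearranged comparison rejects it. Comparing `start` against `limit - length` avoids the addition entirely, which is one common way to write such checks.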

Official announcement: NVIDIA has released a software update for NVIDIA® Jetson AGX Xavier™ series, Jetson Xavier™ NX, Jetson TX1, Jetson TX2 series (including Jetson TX2 NX) in the NVIDIA JetPack™ software development kit (SDK). Please refer to the link for details – https://nvidia.custhelp.com/app/answers/detail/a_id/5343
