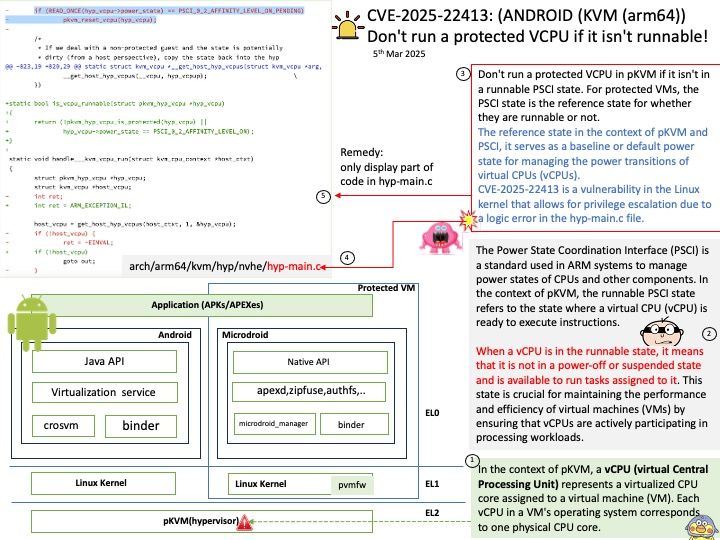

Preface: The protected Kernel-based Virtual Machine (pKVM) is an advanced virtualization technology built on top of the Linux Kernel-based Virtual Machine (KVM). It is designed to enhance security and isolation for virtual machines (VMs) running on Android devices.

Key points about pKVM:

Enhanced Security: pKVM restricts access to the payloads running in guest VMs marked as ‘protected’ at the time of creation. The aim is that even if the host Android system is compromised, the protected guest VMs remain secure.

Isolation: It provides strong confidentiality and integrity guarantees by isolating memory and devices into individual protected VMs (pVMs).

Compatibility: pKVM is compatible with existing operating systems and workloads that rely on KVM-based virtual machines.

Background: In the context of pKVM, a vCPU (virtual Central Processing Unit) represents a virtualized CPU core assigned to a virtual machine (VM). The guest operating system sees each vCPU as a CPU core, but a vCPU is not tied one-to-one to a physical core: the host schedules vCPUs onto the physical CPU cores.

In pKVM, vCPUs are used to manage and allocate processing power to protected virtual machines (pVMs), ensuring that each VM has the necessary resources to operate securely and efficiently.

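Vulnerability details: The fix makes pKVM refuse to run a protected vCPU that is not in a runnable PSCI state. PSCI (Power State Coordination Interface) is the Arm interface through which a guest powers its CPUs on and off. For protected VMs, the PSCI state is the reference state for whether a vCPU is runnable or not.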
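To make the check concrete, here is a minimal Python sketch of the idea. It is not the kernel code from the linked commit. The state names follow the values PSCI reports for a CPU (ON, OFF, ON_PENDING), while `ProtectedVcpu`, `may_run` and `hyp_vcpu_run` are hypothetical names invented for illustration. Treating only `ON` as runnable is also an assumption of this sketch.

```python
from enum import Enum, auto


class PsciState(Enum):
    """Simplified model of a vCPU's PSCI power state."""
    OFF = auto()         # powered off, e.g. not yet turned on by the guest
    ON_PENDING = auto()  # power-on requested but not yet completed
    ON = auto()          # powered on


class ProtectedVcpu:
    """Hypothetical stand-in for the hypervisor's view of a protected vCPU."""

    def __init__(self, vcpu_id, psci_state=PsciState.OFF):
        self.vcpu_id = vcpu_id
        self.psci_state = psci_state


def may_run(vcpu):
    # Assumption of this sketch: only a vCPU that is fully ON is runnable.
    return vcpu.psci_state is PsciState.ON


def hyp_vcpu_run(vcpu):
    """Model of the hypervisor handling a 'run this vCPU' request from the host.

    The hypervisor consults its own record of the PSCI state instead of
    trusting the host's request.
    """
    if not may_run(vcpu):
        return "rejected"
    return "entered guest"
```

In this model, a request to run a vCPU that is `OFF` or `ON_PENDING` returns `"rejected"`, and only a vCPU that is `ON` reaches the guest.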

Official announcement: Please refer to the link for details – https://android.googlesource.com/kernel/common/+/1a3366f0d3d9b94a8c025d9863edc3b427435c4c