Preface: Linux powers large parts of the Internet, cloud infrastructure, and supercomputers, although the exact number of Linux systems in the world is difficult to determine. This trend appears to extend to AI system infrastructure as well.

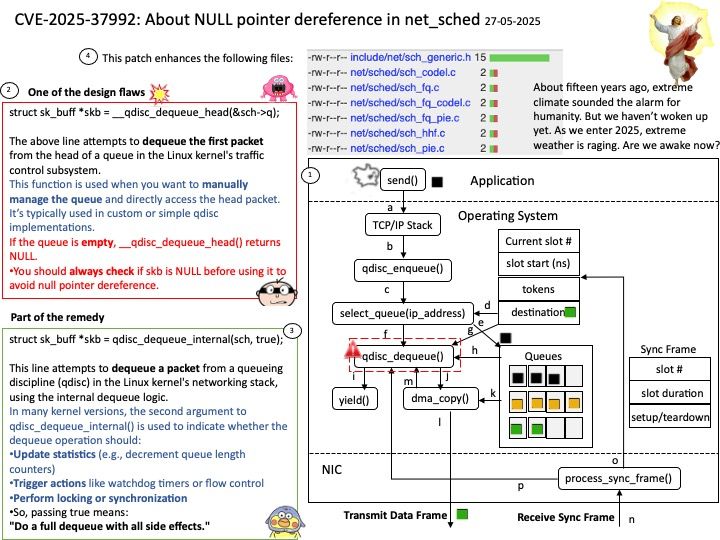

Background: In Linux, “qdisc” stands for queueing discipline. It’s a core component of the Linux traffic control system, responsible for managing and scheduling network traffic on a per-interface basis. Essentially, a qdisc determines how the kernel queues and orders packets before handing them to the network adapter.

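Vulnerability details: Previously, when a qdisc’s limit was reduced via the ->change() operation, only the main skb queue was trimmed, potentially leaving packets in the gso_skb list. The trimming loop only compared sch->limit against sch->q.qlen, and that counter also includes packets held on gso_skb. The loop could therefore keep running after the main queue was already empty and dereference the NULL pointer returned by the dequeue.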

Remedy: This patch introduces a new helper, qdisc_dequeue_internal(), which ensures both the gso_skb list and the main queue are properly flushed when trimming excess packets. All relevant qdiscs (codel, fq, fq_codel, fq_pie, hhf, pie) are updated to use this helper in their ->change() routines.
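
The difference between the old and the new trimming behaviour can be sketched in a small userspace model. This is only an illustration under stated assumptions, not the kernel code: `struct pkt`, `struct toy_qdisc`, `trim_main_only()`, `toy_dequeue_internal()`, `trim_with_helper()` and `demo()` are hypothetical names invented here, and the real qdisc_dequeue_internal() helper may differ in signature and detail. The model assumes a single length counter that covers both lists, as described above.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical, simplified stand-ins for sk_buff and struct Qdisc. */
struct pkt {
    struct pkt *next;
    unsigned int len;
};

struct pkt_list {
    struct pkt *head;
    struct pkt *tail;
};

struct toy_qdisc {
    struct pkt_list q;        /* main queue */
    struct pkt_list gso_skb;  /* packets held aside (requeued or peeked) */
    unsigned int qlen;        /* total packets: counts BOTH lists */
    unsigned int limit;       /* configured maximum */
};

static void list_push(struct pkt_list *l, struct pkt *p)
{
    assert(p != NULL);
    p->next = NULL;
    if (l->tail)
        l->tail->next = p;
    else
        l->head = p;
    l->tail = p;
}

static struct pkt *list_pop(struct pkt_list *l)
{
    struct pkt *p = l->head;

    if (!p)
        return NULL;
    l->head = p->next;
    if (!l->head)
        l->tail = NULL;
    p->next = NULL;
    return p;
}

/* Old behaviour: trim from the main queue only.
 * Returns -1 at the point where a NULL packet would have been used. */
static int trim_main_only(struct toy_qdisc *sch)
{
    while (sch->qlen > sch->limit) {
        struct pkt *p = list_pop(&sch->q);

        if (!p)
            return -1; /* main queue empty, yet qlen is still over limit */
        sch->qlen--;
    }
    return 0;
}

/* Fixed behaviour: drain the side list first, then the main queue. */
static struct pkt *toy_dequeue_internal(struct toy_qdisc *sch)
{
    struct pkt *p = list_pop(&sch->gso_skb);

    if (!p)
        p = list_pop(&sch->q);
    if (p)
        sch->qlen--;
    return p;
}

static int trim_with_helper(struct toy_qdisc *sch)
{
    while (sch->qlen > sch->limit) {
        if (!toy_dequeue_internal(sch))
            return -1; /* would mean qlen and the lists disagree */
    }
    return 0;
}

/* One packet in each list, then the limit is lowered to 0,
 * which is what a ->change() request might do. */
static int demo(int use_helper)
{
    struct pkt a = { NULL, 100 }, b = { NULL, 200 };
    struct toy_qdisc sch = { { NULL, NULL }, { NULL, NULL }, 0, 10 };

    list_push(&sch.q, &a);
    sch.qlen++;
    list_push(&sch.gso_skb, &b);
    sch.qlen++;
    sch.limit = 0;

    return use_helper ? trim_with_helper(&sch) : trim_main_only(&sch);
}
```

In this model, demo(0) returns -1 because the main queue runs dry while one packet still sits on the side list, and demo(1) returns 0 because the helper empties both lists before the loop ends.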

Official announcement: Please see the link for details –