Preface: In smartphones, a System on Chip (SoC), such as those made by Qualcomm, integrates components including the CPU, GPU, and memory. The embedded OS and applications run on this SoC and use its memory (RAM) for processing tasks.

The flash storage in smartphones (often called a flash disk) is used mainly for persistent data such as images, documents, apps, and the operating system itself. This storage is separate from the RAM that the CPU and GPU use for active processing.

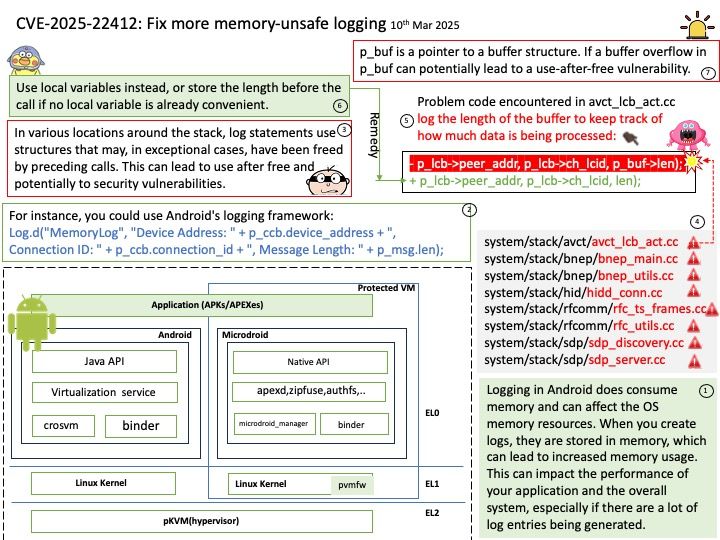

Background: Logging in Android consumes memory and can strain the OS's memory resources. Log entries are held in in-memory buffers, so heavy logging increases memory usage. When many entries are generated, this can degrade the performance of both the application and the overall system.

Vulnerability details: In various locations around the stack, log statements use structures that may, in exceptional cases, have been freed by preceding calls. This can lead to a use-after-free condition and potentially to security vulnerabilities.

Ref: p_buf is a pointer to a buffer structure. If a preceding call frees the buffer and a later log statement still reads fields through p_buf, the result is a use-after-free vulnerability.
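
To make the pattern concrete, here is a minimal C++ sketch of a log statement that could touch a freed buffer, and one common way to avoid it. It is an illustration only, not the code from the linked commit: the `Buffer` struct, `send_and_maybe_free`, and `send_with_safe_log` are hypothetical names invented for this example, and only `p_buf` comes from the description above.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>
#include <cstdlib>
#include <string>

// Hypothetical buffer header (assumption: not the real Bluetooth stack type).
struct Buffer {
  uint16_t event;
  uint16_t len;
};

// Hypothetical callee that takes ownership of p_buf and frees it.
// In a real stack the free might only happen on an exceptional path.
void send_and_maybe_free(Buffer* p_buf) {
  std::free(p_buf);
}

// Unsafe pattern, shown as a comment only so it is never executed:
//   send_and_maybe_free(p_buf);
//   printf("sent len=%u\n", p_buf->len);  // reads memory that may be freed

// Safer pattern: copy the fields the log needs BEFORE handing over ownership,
// then build the log message from the local copies.
std::string send_with_safe_log(Buffer* p_buf) {
  assert(p_buf != nullptr);
  const uint16_t event = p_buf->event;
  const uint16_t len = p_buf->len;

  send_and_maybe_free(p_buf);  // p_buf must not be dereferenced after this

  char msg[64];
  std::snprintf(msg, sizeof(msg), "sent event=0x%04x len=%u",
                static_cast<unsigned>(event), static_cast<unsigned>(len));
  return std::string(msg);
}
```

A caller would allocate a `Buffer` with `std::malloc`, fill in `event` and `len`, and pass it to `send_with_safe_log`. The returned message is built entirely from values copied before the free, so the log never reads through a dangling pointer.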

Official announcement: Please refer to the link for details – https://android.googlesource.com/platform/packages/modules/Bluetooth/+/806774b1cf641e0c0e7df8024e327febf23d7d7c